Killerbots, guided by Pine Gap, same as any other weapon?

2 April 2019

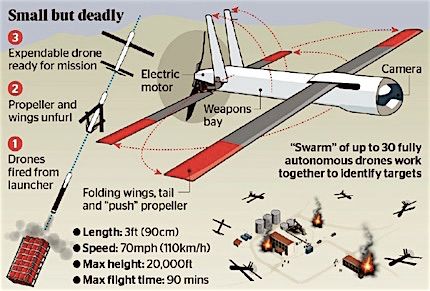

Above: Swarm of killerbots leaving a plane. Photograph from the Future of Life Institute site.

By KIERAN FINNANE

Last updated 2 April 2019, 2.49pm.

As Alice Springs hosts Pine Gap, a military base intimately involved in remote warfare, residents here have more than the usual stake in a debate unfolding far beyond our horizons – whether to ban or how to control Lethal Autonomous Weapons Systems, as the United Nations has dubbed them, or “killer robots” in popular parlance.

These are close to becoming a reality. The design capabilities being developed for driverless cars and AI-driven cameras are also being used for developing killer machines.

An Australian Air Force conference last year heard about nano-explosives with 10 times the force of conventional explosives that can be mounted as warheads on small drones. These drones can be manufactured by 3D printing: producing tens of thousands a day with only 100 printers is already possible. The US and Chinese militaries are already working on launching large numbers in minutes, the conference was told.

Weaponised, they are like expendable rounds of ammunition – coming at you in swarms. They won’t be controlled, even remotely, by pilots.

Left: Diagram from the presentation by Dr T.X. Hammes to the 2018 Air Power Conference. Detail below.

The decision, in the conflict zone, of what to hit and what to avoid will have been programmed in at the design stage.

Deployment and targeting will still be human-directed unless – in this almost unimaginable brave new world – the killerbots get away from us.

It is the targeting function that makes the Joint Defence Facility Pine Gap relevant, as it is already, and has for a long time been, a key provider of targeting data to piloted and remotely piloted (no-one on board) weaponised air force assets. As such, JDFPG is part of Australian and US weapons systems and is controversially involved in drone strikes in countries with which Australia is not at war.

The Australian Government, of whatever stripe, has never explained to the Australian people how this meshes with our international obligations. We are simply told “trust us, it does”.

And now the Australian Government is telling the family of nations that its existing “system of control” for the application of any military force is a sufficient safeguard in relation to Lethal Autonomous Weapons Systems (LAWS).

Its representatives were in Geneva last week, for the gathering of the Group of Governmental Experts (GGE) which this year is focussing on LAWS.

As the GGE convened, the Secretary-General of the United Nations, António Guterres, urged them to work towards the terms of a ban: “Machines with the power and discretion to take lives without human involvement are politically unacceptable, morally repugnant and should be prohibited by international law,” he said.

In his view, a ban like the one achieved against chemical weapons provided a possible framework.

However, the Australian representatives argued that no “specific ban” is required. Their document, submitted to further the GGE’s “understanding and discussions”, seems not to recognise any inherent characteristics in LAWS that would trouble the “system of control” applied to other weapons systems.

Significantly, their document did not use the term Lethal Autonomous Weapons Systems or the acronym LAWS. The word “lethal” was omitted; they spoke of Autonomous Weapons Systems or AWS.

They argued that a system of control is required for any military use of force, regardless of whether autonomous weapons are used, and went on to outline Australia’s system – “an incremental, layered approach to applying control, covering all aspects of a weapon system from design through to engagement”.

As such, it “recognises and addresses that some types of AWS and some battlespaces will require more human direction or interaction than others”.

Conversely, some are envisaged that require less and this is what is worrying many countries, organisations and individuals around the world.

Twenty-eight countries in the UN have explicitly endorsed the call for a ban on LAWS: Algeria, Argentina, Austria, Bolivia, Brazil, Chile, China, Colombia, Costa Rica, Cuba, Djibouti, Ecuador, Egypt, El Salvador, Ghana, Guatemala, Holy See, Iraq, Mexico, Morocco, Nicaragua, Pakistan, Panama, Peru, State of Palestine, Uganda, Venezuela, Zimbabwe.

And to date, 247 organisations and 3253 individuals have signed a pledge, organised by the Future of Life Institute, calling upon governments and government leaders “to create a future with strong international norms, regulations and laws against lethal autonomous weapons”.

The organisations include Google DeepMind, Silicon Valley Robotics and numerous other robotics companies as well as universities and research institutes; the individuals include Tesla CEO Elon Musk, Skype founder Jaan Tallinn, and numerous top-ranked researchers and practitioners in the AI field.

While the Australian position seems complacent in the face of this building concern, of some comfort is the fact that its system envisages a degree of input from the public.

The starting point of control for any weapons system, says its document presented to the GGE, is the Australian Constitution, and compliance with domestic and international law and Government direction.

“While Government direction must be in accordance with the law, arguably it must also be in accordance with societal values and customs. This creates the broadest layer of control for any military weapon system that is considered for development, procurement or use by Australia,” says the Australian document.

However, the Australian Government’s refusal to have any kind of frank discussion with the Australian people about the military operations in which Pine Gap is involved does not exactly inspire confidence in this as an effective “layer of control”.

The GGE document also talks about Australia’s international legal obligations, which include conducting an “Article 36 Review” of a proposed weapon to consider whether it is contrary “to the public interest, to principles of humanity or the dictates of public conscience”.

This would seem to require an effort in public education in the facts and issues, followed by a public debate. But meanwhile, we have Australia advocating a position at the UN: “If states abide by similar systems of control to that of the Australian system of control, (consistent with IHL*), states’ militaries should be able to appropriately ensure that all weapon systems, including AWS, will operate in a lawful and deliberate manner without the need for a specific ban, at this time.”

Meanwhile, the Australian Defence Force has funded a large research project, looking into the development of “ethical” autonomous weapons. According to the ABC, “the wide-ranging project will investigate public perceptions of AI weaponry, how the technology could be used to enhance military compliance with the law, and the values of the people who would be designing this killing technology.”

In a future article, the Alice Springs News Online will try to get answers on how the public will be engaged by this project.

*IHL – international humanitarian law, which dictates what can and cannot be done during an armed conflict.

Humanity is becoming too clever for its own good.

No Pine Gap – No Alice Springs – Get your 30,000 plus sunscreen ready.

I hope this becomes the plot for Pine Gap season 2.

Thanks for the very informative article Kieran, much to ponder.

Thanks Kieran. It seems to me it would be better to be directing money and technology into projects promoting peace and harmony … sigh!

Good, informative article. Be aware, though, cruise missiles are fully autonomous once launched. There is no doubt, however, that LAWS must be banned. If for no other reason than, with current computer technology and programming, no weapons system is bug free.

Thanks for this very informative article Kieran. The use of killer robots (or Lethal Autonomous Weapons Systems (LAWS)) is most concerning – and something that all Australians should be concerned about.

I support the call by the Secretary-General of the United Nations, António Guterres, for a ban on LAWS.

There is a global campaign to stop killer robots, and a campaign team here in Australia – who are seeking widespread support. We can all get involved in this.

https://safeground.org.au/what-we-do/campaign-to-stop-killer-robots/